thoughts on the executive order for AI safety

mostly eh, slightly ohhh, and just a tiny bit of hmm...

On Halloween the government dropped an executive order on AI, spooooky. My initial thoughts[1] are that the statements themselves are pretty underwhelming, but there are some concerning points.

It ultimately lists a bunch of things people need to do in the coming weeks and months - “develop guidelines for this”, “we need a committee for that”, etc. The timelines mentioned range from 90 to 270 days.

I read the full report, which is different from the fact sheet that most people skimmed over (not worth it). From the full report you can see how many different organizations are going to be obligated to come up with guidelines for various parts of the AI landscape. You also get a glimpse of some of the numbers they are throwing around and some interesting definitions for things:

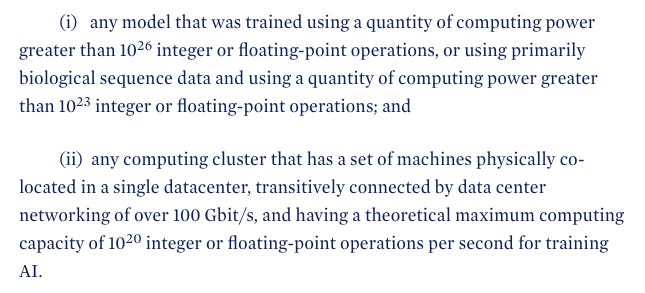

Likely the most interesting part of this report is the reporting requirements: essentially, you need to care about this if you fall under the categories below. It’s worth mentioning, though, that these numbers could change at any point.

Putting this into perspective helps with understanding what these statements mean and who should be concerned.

One Nvidia H100 (at FP16) is rated at 1,979 teraFLOPS.

and with a theoretical compute cluster threshold of 10^20 FLOPS we get:
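Here is that division as a quick back-of-the-envelope sketch. The variable names are mine, the per-GPU figure is just the 1,979 teraFLOPS quoted above, and it assumes every GPU runs at its peak rating with zero overhead, which no real cluster does:

```python
# Rough estimate only: how many H100s add up to the cluster threshold,
# assuming each GPU delivers its full peak FP16 rating (an idealization).
H100_FP16_TERAFLOPS = 1_979            # per-GPU figure quoted above
CLUSTER_THRESHOLD_FLOPS = 10**20       # theoretical cluster threshold

h100_flops = H100_FP16_TERAFLOPS * 10**12  # teraFLOPS -> FLOPS

# Integer ceiling division, so we count whole GPUs without float rounding.
gpus_needed = -(-CLUSTER_THRESHOLD_FLOPS // h100_flops)

print(f"{gpus_needed:,} H100s")  # 50,531 H100s
```

So roughly fifty thousand H100s, give or take whatever you lose to real-world utilization.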

So unless you have that in your garage (I want to be your friend if so) you don’t need to worry about explaining why you’re fine-tuning llama-7B on redacted.

But we’re not in the clear yet…

Section 4.6 talks about the issue with open-sourcing weights and, like the rest of the report, obligates someone to discuss with someone else how to go about this.

In this case the subject matter experts who will weigh in on how to approach this are the private sector, academia, civil society, and other stakeholders. I have a feeling that I already know who will be saying what out of those stakeholders[4]…

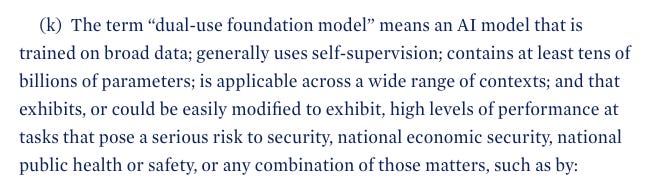

Also, weirdly, they keep talking about foundation models, so it seems like a lot of this report doesn’t care about 90% of the ML space. Maybe good?

Section 4.6, to me, is much more concerning than any sort of limit on training or compute. There are groups across many industries that benefit from open source research, and if you’re reading this you’re probably one of them. Depending on what’s decided here by the stakeholders, things could get really depressing.

Section 5 is good.

The report goes into the job market, attracting talent, and how the landscape will change with AI technology affecting everyone. I don’t really know how much of this will become reality, but the language is good.

We want talent in the US, we don’t want it anywhere else. Makes sense.

We want to promote competition. Sounds good.

We want people to partner across industry. Cool.

…

The rest of the report goes into equality and making things fair. I didn’t think anything here was that impressive; like the rest of the report, a lot of this is just words with timelines. We will see soon how this actually unfolds, but it’s definitely an interesting time to live in.

My key takeaways are that the thresholds are irrelevant for hobbyists but are potentially problematic for larger organizations “just tryna try things”. I am curious how much of this is “red tape” and how much of it is just another process we all have to follow and live with.[5]

The real issue for me is the open source discussion. I believe in open source strongly and without it I think we are in for a few winters. Let’s hope these future “discussions” are productive.

I am optimistic about this order and overall I am surprised that it’s not a total catastrophe. But let’s see how it goes…

[1] 2AM speech to text notes, a bit of editing and some “research”…

[2] That’s also about $1,500,000,000 (for just the GPUs), and yes, ok, I did just multiply by the MSRP and you’re likely getting a bulk discount at this level, but still...

[3] I plugged this into ChatGPT and it was off by a factor of 10^2 lol

[4] Did someone say regulatory capture?

[5] I love accepting cookies on a website before being able to use it.